The world’s smallest supercomputer has been unveiled, and it is small enough to fit in your pocket.

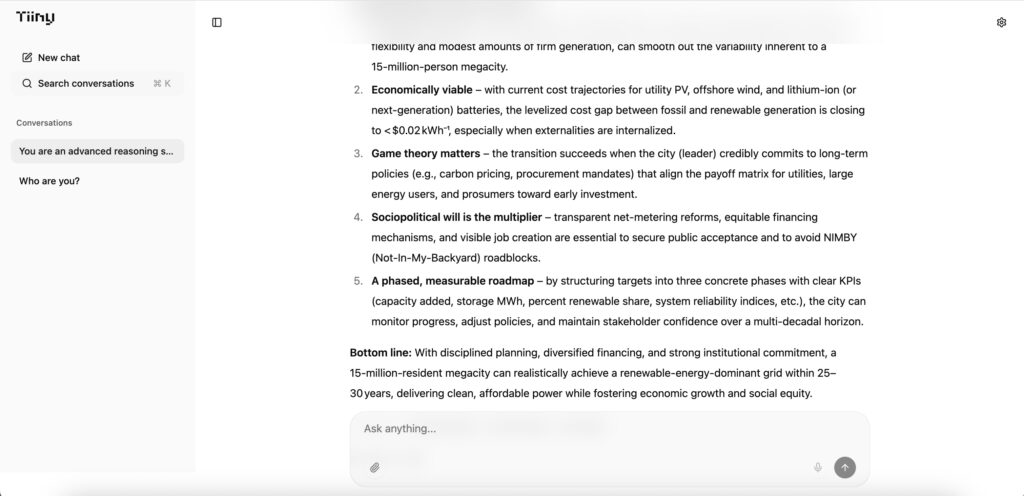

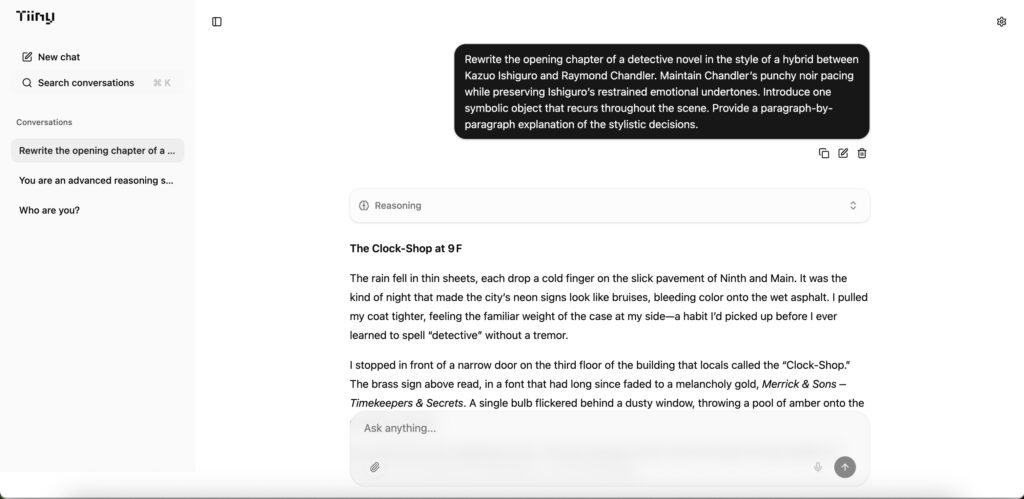

The Tiiny AI Pocket Lab is about the size of a mobile phone but is powerful enough to handle complex artificial intelligence tasks.

Despite its tiny size, the device is capable of autonomous problem solving, abstract reasoning and strategic planning.

It can run a massive 120-billion-parameter large language model without needing cloud connectivity, servers or high-end GPUs.

The pocket-sized powerhouse packs a huge 80GB of RAM.

By comparison, most modern laptops come with between 8GB and 32GB of RAM.

Of that total, 48GB is reserved purely for the neural processing unit, or NPU, a chip specifically designed for AI calculations.
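A rough back-of-the-envelope check shows why the amount of RAM matters for a model of this size. The sketch below estimates the memory needed for the weights alone at a few common precisions. The bit widths are illustrative assumptions: Tiiny AI has not been quoted here on which precision the Pocket Lab uses, and the helper name `weight_memory_gb` is hypothetical.

```python
def weight_memory_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes.

    Ignores activations, the KV cache and runtime overhead, so real
    requirements are higher than this figure.
    """
    return num_params * bits_per_weight / 8 / 1e9


PARAMS = 120e9  # the 120-billion-parameter model size quoted in the article

# Assumed precisions, for illustration only.
for bits in (16, 8, 4):
    print(f"{bits}-bit weights: {weight_memory_gb(PARAMS, bits):.0f} GB")
# 16-bit weights: 240 GB
# 8-bit weights: 120 GB
# 4-bit weights: 60 GB
```

Under these assumptions, only the 4-bit figure comes in below the device's 80GB of RAM, which suggests that some form of aggressive compression is needed to run a model this large on it.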

The Pocket Lab is classed as a supercomputer rather than a mini PC or workstation because of its computing power.

It can handle workloads that would normally require multi-GPU data centre systems, in particular running language models with more than 100 billion parameters locally.

The device is designed to support a wide range of personal AI uses for developers, researchers, creators, professionals and students.

It also holds the Guinness World Record for the smallest AI computer.

Tiiny AI says the device’s key advantages are its lower power use and its independence from cloud services, What’s The Jam reports.

Samar Bhoj, of Tiiny AI, said: “Cloud AI has brought remarkable progress, but it also created dependency, vulnerability, and sustainability challenges.

“With Tiiny AI Pocket Lab, we believe intelligence shouldn’t belong to data centers, but to people.

“This is the first step toward making advanced AI truly accessible, private, and personal, by bringing the power of large models from the cloud to every individual device.”

The Tiiny AI Pocket Lab is powered by two major technology breakthroughs designed to make huge AI models viable on such a compact device.

TurboSparse is a neuron-level sparse activation technique, which means only the neurons needed for a given input are computed. Tiiny AI says this improves efficiency without reducing the model’s capability.
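The general idea behind neuron-level sparse activation can be shown with a toy example. This is a minimal sketch of the concept, not TurboSparse itself: the tiny layer, its weights and the function names are all made up for illustration. Here the set of active neurons is found by checking every neuron directly, whereas a real system would have to predict that set cheaply in advance.

```python
def dot(a, b):
    """Dot product of two equal-length lists."""
    return sum(x * y for x, y in zip(a, b))


def relu(x):
    """ReLU activation: negative values become zero."""
    return x if x > 0 else 0.0


def dense_ffn(x, w_up, w_down):
    """Compute every hidden neuron, including those that output zero."""
    out = [0.0] * len(w_down[0])
    for up_row, down_row in zip(w_up, w_down):
        h = relu(dot(up_row, x))
        for j, w in enumerate(down_row):
            out[j] += h * w
    return out


def sparse_ffn(x, w_up, w_down, active):
    """Compute only the hidden neurons listed in `active`."""
    out = [0.0] * len(w_down[0])
    for i in active:
        h = relu(dot(w_up[i], x))
        for j, w in enumerate(w_down[i]):
            out[j] += h * w
    return out


# Hypothetical toy layer: 2 inputs, 4 hidden neurons, 2 outputs.
x = [1.0, -2.0]
w_up = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [2.0, 0.5]]
w_down = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, 0.5]]

# Oracle for illustration only: find active neurons by brute force.
active = [i for i, row in enumerate(w_up) if dot(row, x) > 0]

dense = dense_ffn(x, w_up, w_down)
sparse = sparse_ffn(x, w_up, w_down, active)

print(active)  # [0, 3]
print(dense)   # [1.5, 0.5]
print(sparse)  # [1.5, 0.5]
```

In this example half of the neurons contribute nothing to the result, so skipping them halves the work while giving the same answer. The savings on a real model depend on how many neurons are inactive and how accurately they can be predicted.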

PowerInfer is an open-source heterogeneous inference engine with more than 8,000 GitHub stars.

It accelerates heavy large language model workloads by dynamically distributing computing tasks across the CPU and NPU, enabling server-grade performance while using far less power.
